Portability matters. But the real reason teams stay stuck is fear of what a model change will break.

In the mid-1990s, Sun Microsystems sold developers a dream: "write once, run anywhere." Java promised an escape from OS-specific binaries. Software has always chased portability, but the portability problem has moved up the stack. Containers largely solved environment portability. The hard question now is whether an AI application can survive changes in model provider, storage backend, and cloud environment without turning every migration into a rewrite.

Most teams do not feel locked in during the prototype. They feel it later, when pricing, rate limits, security requirements, or model versions change. By then, "we can switch later" usually means unwinding provider-specific assumptions that leaked into prompts, workflows, storage, and deployment. What looked like a straightforward integration turns into long-lived technical debt.

The obvious answer is abstraction, but abstraction alone is not enough.

Swapping an API client is the easy part. The challenge is trusting the behavior on the other side. A new model can be cheaper and faster and still fail where it matters: edge-case reasoning, JSON reliability, tool calling, latency, or tone. That is the lock-in teams actually feel. Not "we literally cannot change vendors," but "we do not trust what will happen if we do."

Keep Business Logic Stable, Keep Infrastructure Configurable

We built Planar around a simpler idea: keep business logic stable while model and infrastructure choices remain configurable. Planar is a Python framework for durable workflows, agents, and stateful APIs. It separates provider selection from workflow logic through environment-aware config, supports multiple model providers and storage adapters, and lets customers deploy in their own cloud (BYOC) rather than ours.

In practice, that means a provider switch should look more like a controlled config change than a codebase rewrite. A simplified version of that pattern looks like this:

    ai_models:
      providers:
        aws_bedrock: ...
        azure_openai: ...
      models:
        default_model:
          provider: aws_bedrock
          model: opus-4.7

The important part is the boundary the YAML config creates: workflow logic on one side, provider selection on the other.
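To make that boundary concrete, here is a minimal Python sketch of what it could look like from the workflow side. It is not Planar's actual API: `CONFIG`, `ModelRef`, `resolve_model`, `CLIENTS`, and `summarize_ticket` are hypothetical names, the dictionary is an assumed in-memory mirror of the YAML above, and the provider clients are stubs rather than real SDK calls.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Assumed in-memory mirror of the YAML above; not Planar's actual schema.
CONFIG = {
    "ai_models": {
        "providers": {"aws_bedrock": {}, "azure_openai": {}},
        "models": {
            "default_model": {"provider": "aws_bedrock", "model": "opus-4.7"},
        },
    }
}


@dataclass(frozen=True)
class ModelRef:
    provider: str
    model: str


def resolve_model(config: dict, logical_name: str) -> ModelRef:
    """Map a logical model name to a concrete provider and model id."""
    section = config["ai_models"]
    entry = section["models"][logical_name]
    if entry["provider"] not in section["providers"]:
        raise KeyError(f"unknown provider: {entry['provider']}")
    return ModelRef(provider=entry["provider"], model=entry["model"])


# Stub clients standing in for real provider SDK calls (hypothetical).
CLIENTS: Dict[str, Callable[[str, str], str]] = {
    "aws_bedrock": lambda model, prompt: f"[bedrock/{model}] {prompt}",
    "azure_openai": lambda model, prompt: f"[azure/{model}] {prompt}",
}


def summarize_ticket(config: dict, text: str) -> str:
    """Workflow logic: it only ever names the logical model 'default_model'."""
    ref = resolve_model(config, "default_model")
    return CLIENTS[ref.provider](ref.model, f"Summarize: {text}")
```

In this sketch, pointing `default_model` at a different provider changes what `summarize_ticket` calls without touching the function itself, which is the property the config boundary is meant to protect.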

The same separation should hold outside model access. Workflow code should not care whether files land on local disk, S3, or Azure Blob, and it should not have to absorb every cloud-specific deployment choice just to keep running. Those are platform decisions that should stay platform decisions.
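One way to picture that separation in code is a small storage interface that workflow logic depends on, with the concrete backend chosen elsewhere. The sketch below is an illustration under assumptions, not Planar's storage adapter API: `FileStore`, `LocalDiskStore`, `InMemoryStore`, `make_store`, and `archive_report` are hypothetical names, and the in-memory class merely stands in for a remote backend such as S3 or Azure Blob.

```python
from pathlib import Path
from typing import Dict, Protocol


class FileStore(Protocol):
    """The only storage surface workflow code is allowed to see."""

    def put(self, key: str, data: bytes) -> None: ...

    def get(self, key: str) -> bytes: ...


class LocalDiskStore:
    def __init__(self, root: Path) -> None:
        self.root = root

    def put(self, key: str, data: bytes) -> None:
        path = self.root / key
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_bytes(data)

    def get(self, key: str) -> bytes:
        return (self.root / key).read_bytes()


class InMemoryStore:
    """Stand-in for a remote object store such as S3 or Azure Blob."""

    def __init__(self) -> None:
        self._objects: Dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]


def make_store(settings: dict) -> FileStore:
    """Platform decision: pick a backend from (hypothetical) settings."""
    backend = settings["backend"]
    if backend == "local":
        return LocalDiskStore(Path(settings["directory"]))
    if backend == "memory":
        return InMemoryStore()
    raise ValueError(f"unsupported backend: {backend}")


def archive_report(store: FileStore, report_id: str, body: str) -> str:
    """Workflow logic: writes a report without knowing where it lands."""
    key = f"reports/{report_id}.txt"
    store.put(key, body.encode("utf-8"))
    return key
```

Only `make_store` knows which backend is in use. `archive_report` stays the same whether the bytes end up on local disk or in an object store.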

    storage:
      backend: s3
      region: us-west-2
      bucket_name: ${S3_BUCKET_NAME}

    secrets:
      providers:
        aws_prod:
          provider: aws_secrets_manager
      custom_secrets:
        api_password: ${secret:aws_prod:${CUSTOM_SECRET_NAME}:API_PASSWORD}

Portability is Only Half the Story

Still, portability is only half the story. If you want the ability to actually change vendors, you need a disciplined way to evaluate behavior before you flip the switch. Code is deterministic. Models are not. A provider change can alter output shape, response quality, latency, and failure modes without changing a single line of application code.

That regression risk is what makes switching providers hard.

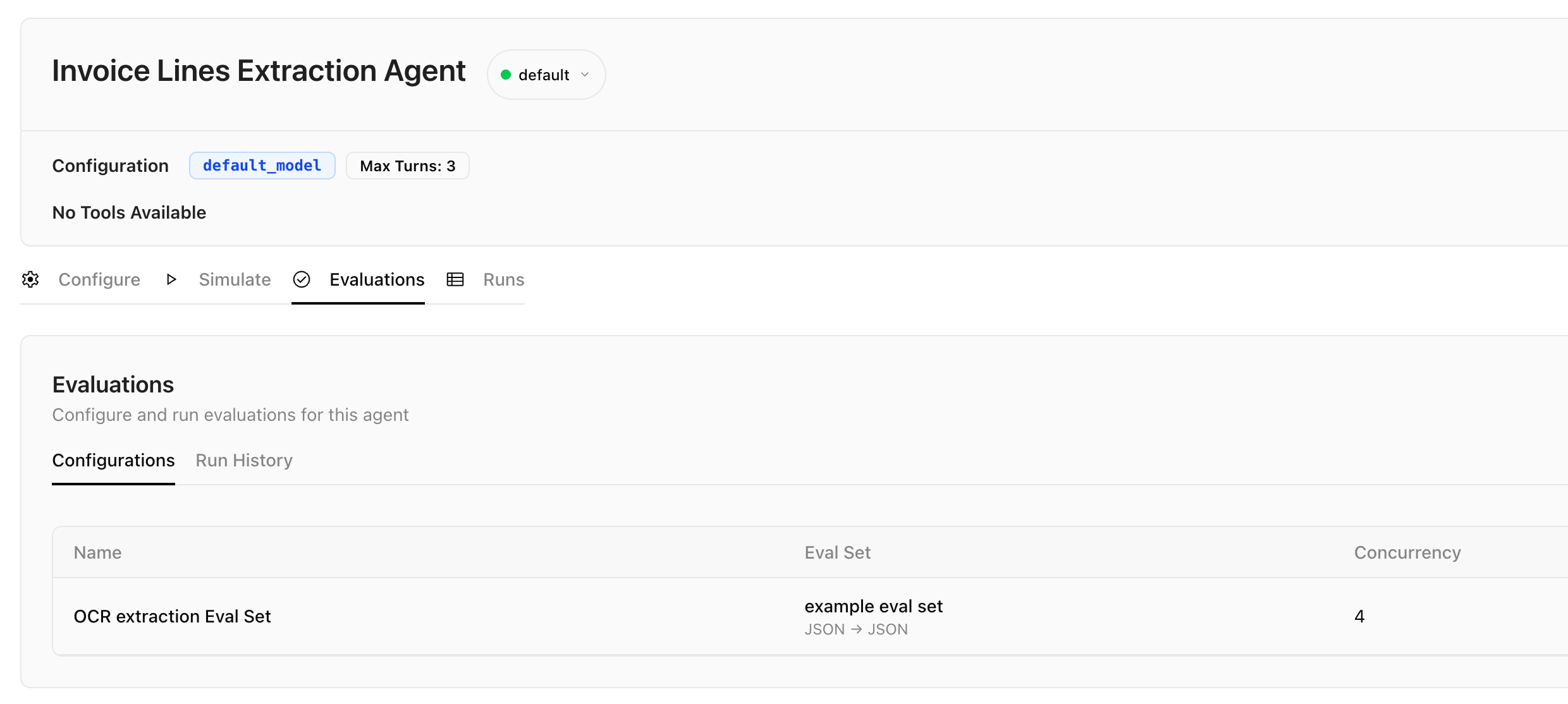

The answer is not to pretend models are interchangeable. They are not. And an abstraction layer cannot accommodate all of the differences in reasoning quality or behavior. What it should do is isolate those differences, make them measurable, and keep them from leaking all over your system. In practice, that means pairing portability with evals: representative inputs, expected outputs, edge cases, and business-specific pass/fail criteria. When a new model clears that bar, you can move with confidence. When it does not, you learn that before production learns it for you.
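As a rough illustration of pairing portability with evals, the sketch below gates a model switch on a small set of cases with pass/fail checks. Everything in it is an assumption made for the example: `EvalCase`, `is_json_with_keys`, `run_evals`, `safe_to_switch`, the two cases, and the stub models are hypothetical and do not come from Planar or any eval library. A real suite would use representative production inputs and richer criteria.

```python
import json
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class EvalCase:
    name: str
    prompt: str
    check: Callable[[str], bool]  # business-specific pass/fail criterion


def is_json_with_keys(*keys: str) -> Callable[[str], bool]:
    """Build a check that passes only for a JSON object containing all keys."""

    def check(output: str) -> bool:
        try:
            parsed = json.loads(output)
        except json.JSONDecodeError:
            return False
        return isinstance(parsed, dict) and all(k in parsed for k in keys)

    return check


def run_evals(model: Callable[[str], str], cases: List[EvalCase]) -> List[str]:
    """Run every case against the model and return the names that failed."""
    failures = []
    for case in cases:
        try:
            passed = case.check(model(case.prompt))
        except Exception:
            passed = False  # a crash or provider error counts as a failure
        if not passed:
            failures.append(case.name)
    return failures


def safe_to_switch(model: Callable[[str], str], cases: List[EvalCase],
                   max_failures: int = 0) -> bool:
    return len(run_evals(model, cases)) <= max_failures


CASES = [
    EvalCase("refund_json", "Classify: 'I want my money back'",
             is_json_with_keys("intent", "confidence")),
    EvalCase("empty_input", "Classify: ''", is_json_with_keys("intent")),
]


def current_model(prompt: str) -> str:
    # Stub for the model in production today.
    return '{"intent": "refund", "confidence": 0.9}'


def candidate_model(prompt: str) -> str:
    # Stub for a candidate that answers in prose on the empty-input edge case.
    if prompt.endswith("''"):
        return "I could not find any text to classify."
    return '{"intent": "refund", "confidence": 0.8}'
```

Here `run_evals(candidate_model, CASES)` flags the `empty_input` case, so `safe_to_switch` returns `False` for the candidate even though it handles the common path. That is the kind of difference an abstraction layer cannot hide but an eval gate can surface before production does.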

Vendor Agnostic, Defined Properly

That is the standard we think teams should hold. "Vendor agnostic" means keeping workflow logic durable, keeping infrastructure choices configurable, and making model changes testable.

Planar is built to hold that line. Workflow logic stays stable, infrastructure stays configurable, and model changes go through evals before they go to production. The goal is not to abstract away differences between providers. It is to make those differences visible and testable so your team can move between them without guessing.

To see these ideas in practice, you can install the package via uv and test the local execution environment today.